Artificial Intelligence

Get familiar with the university’s AI regulations and read the relevant student best practices. Then complete the related AI questionnaire between 11 and 31 May for a chance to win one of three one-day tickets to Sziget Festival!

Artificial intelligence (AI) is fundamentally transforming the way we learn, teach, and evaluate performance, and is also influencing the development of various scientific fields and professions. To prepare for the challenges of the future, all students need to learn how to use AI-based tools, such as text generation and conversation systems, consciously – i.e., ethically, effectively, and sustainably – so that their use is an advantage rather than a disadvantage. The educational application of AI requires independent thinking, a responsible attitude, and ethical and rule-abiding behaviour.

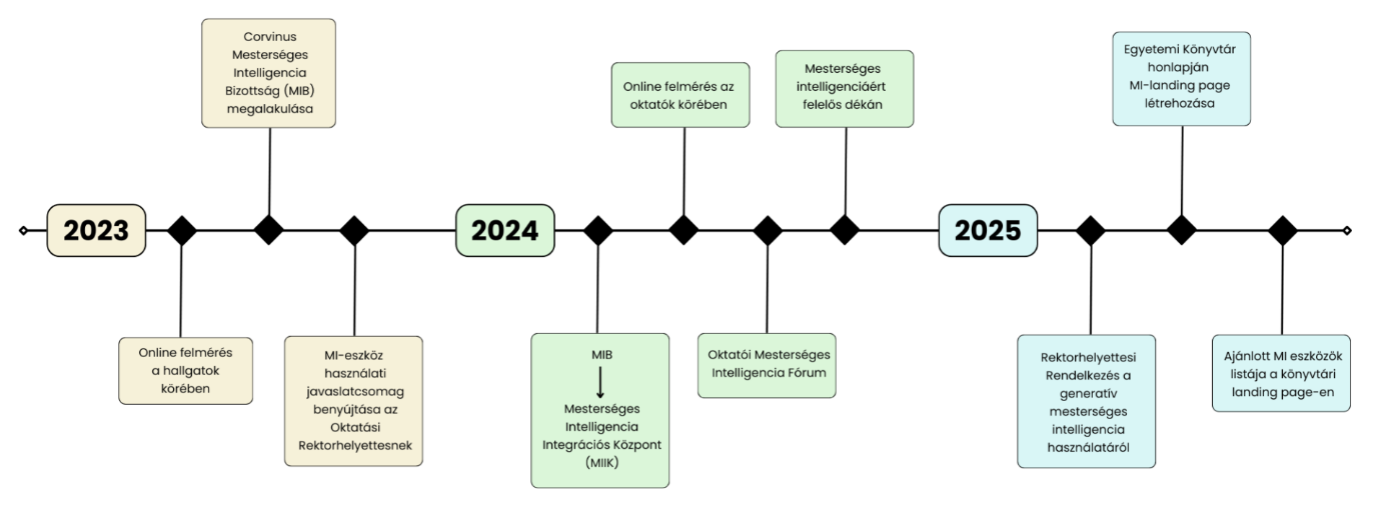

Regulation of GenAI use – the 6/2025 VRAP Provisions

Since September 15, 2025, the Vice-Rector for Education’s 6/2025 Regulation “On the Use of Generative Artificial Intelligence Systems in Education” has been in effect. This AI regulation defines the obligations of teachers and students regarding AI and the rules to be followed in connection with the Study and Exam Regulations.

Information on the interpretation of the regulation can be found in the FAQ (at the bottom of the page), and you can learn more about the main points here: Provision 6/2025

Presentation by the Dean for Artificial Intelligence to students, September 2025:

Artificial Intelligence regulations at Corvinus University

Videos:

AI Framework Regulations

In the fall of 2025, Corvinus’s unified AI regulations came into effect: 12/2025 “Executive Committee Regulation on the Use of Artificial Intelligence Systems.” This Regulation lays down the values, principles, and standards of conduct applicable to all types of AI and to all university activities. The above Regulation, supplemented by Regulation 6/2025 VRAP, provides a solid foundation for the ethical, effective, and sustainable use of AI in learning. 12/2025 VB Regulation

Thesis principles and subject guidelines

In accordance with the relevant VRAP Decree, the use of AI in the preparation of theses or dissertations may vary from programme to programme and must be communicated to graduating students in the thesis guidelines (or in an appendix thereto) for the given programme. Information on the necessity and form of the AI usage statement must also be provided there. Please look for the thesis guidelines on your programme’s website (links to programmes – login required – BA: https://www.uni-corvinus.hu/post/landing-page/jelenlegi-hallgatoinknak/?lang=en#bachelors; MA: https://www.uni-corvinus.hu/post/landing-page/jelenlegi-hallgatoinknak/?lang=en#masters; Specialized Further Education: https://www.uni-corvinus.hu/post/landing-page/jelenlegi-hallgatoinknak/?lang=en#postgraduate) – or ask the programme leader or advisor.

Sample statements

In connection with the preparation of a thesis or diploma project, the department may require a statement regarding the use of Generative AI (GenAI). This may be brief or detailed, depending on the characteristics of the program. Regulation 6/2025 VRAP provides samples for each case, but information on this may also be found in the thesis guidelines. In addition, on the university’s Neptun login page, under “Downloadable Documents” (https://neptun3r.web.uni-corvinus.hu/hallgatoi/login), you can select the appropriate Word file. The file contains a few short ethical statements and one detailed one. Insert the appropriate one into your thesis without signing it, as required in the thesis guidelines for your department. Please note that if your department has specific requirements, you must also provide more detailed information in the text of your thesis.

Sustainability challenges of GenAI

explanation

link

BEST PRACTICES AND GUIDES

How to approach AI in learning

The informed use of artificial intelligence is a part of digital competence and is increasingly expected to become a labour market requirement; therefore, it is recommended that students develop adequate knowledge in this area.

At the same time, the applicability of artificial intelligence is not always straightforward, and its use may also involve risks, so it is important for all students to become familiar with those risks as well.

We ask students to familiarize themselves with and respect the rules and regulations related to the use of artificial intelligence, not only at the university level, but also within their field of study.

In relation to your specific field of study, we recommend that you reflect on your goals in the learning process and on the areas in which you would like to improve.

Then, look for AI tools that will support these goals – but before using them, be sure to check that they are in alignment with the rules and values of the given course or program.

On this institutional AI website, you will find detailed support materials on ethical use, tool selection, effective application, and more.

AI Best Practices

The following best practices present usage patterns that, from a student perspective, demonstrate the conscious, ethical, responsible, critical, and legally compliant use of artificial intelligence in learning and in completing academic tasks. In all cases, the very first step is to become familiar with the regulatory frameworks of the broader environment (e.g. the EU, the country, the sector, the university, the program, and the course). To support this, it is advisable to ask the instructor of the relevant course – or, in the case of a thesis, the supervisor or program coordinator – to assist in clarifying the framework regulations related to the use of AI.

| Best practice | Risks |
| --- | --- |
| Students may use AI to rephrase complex concepts or to provide practical examples, while a challenging theoretical source remains the primary reference. | AI may produce oversimplifications or inaccuracies (“hallucinations”). |
| Students may ask AI to explain the same topic at multiple levels, tailored to their own knowledge level, learning capacity, and learning style (e.g. more analytical or more visual), thereby continuously supporting deeper understanding. | Oversimplification may lead to inaccuracies; therefore, it is important to compare final conclusions with the original academic literature. |
| Students may input unstructured notes (e.g. university lecture notes or notes/transcripts from online meetings), from which AI can generate a thematic summary, highlighted key points, or a concept map. | If the input contains personal data or copyrighted learning materials (e.g. a textbook), data protection issues may arise. The model may also “overinterpret” the content, and critical perspectives may be omitted. |
| Students may use AI to create self-assessment tasks, short quiz questions, or multiple-choice tests based on the uploaded learning materials. | Overly simple or incorrect questions may mislead the learner; sharing copyrighted content may also pose a problem, as may cases where, based on source materials that explain concepts in multiple ways, the AI does not provide appropriate answers. |
| AI can generate different roles and creative scenarios, the analysis of which supports the development of students’ thinking skills. | AI may generate cultural information based on stereotypes, which can present a distorted picture of certain groups. The scientific grounding of the scenarios may be insufficient. |
| Students may rehearse interview situations (e.g. practicing behavioral or technical interview questions) or oral examination scenarios. | AI may occasionally expect overly idealized or unrealistic responses. |
| AI can be used to practice the interpretation of statistical results. | AI may occasionally provide overly general or misleading interpretations, taken out of context. |
| The AI model can generate counterarguments that students are required to refute. | AI does not always indicate which arguments are debatable or scientifically unsound. |
| AI helps to understand how two theoretical models or concepts are related. | AI tends to create relationships that appear logical but are professionally inaccurate. |
| Through AI-generated examples, students can analyze social and algorithmic biases. | Students need to be aware that the models themselves may also carry such biases. |

| Best practice | Risks |

| AI can help create outlines with logical structures and chapter headings. | If students mechanically adopt the generated content, their own reasoning and independent understanding of the topic as a whole may be undermined. |

| AI can be effectively used for text translation and for improving and refining spelling and style. | Students may become accustomed to translating entire sentences using AI instead of grappling with the text themselves, thereby hindering the development of their grammar, vocabulary, and independent communication skills. The risk of plagiarism and AI-generated content also increases. |

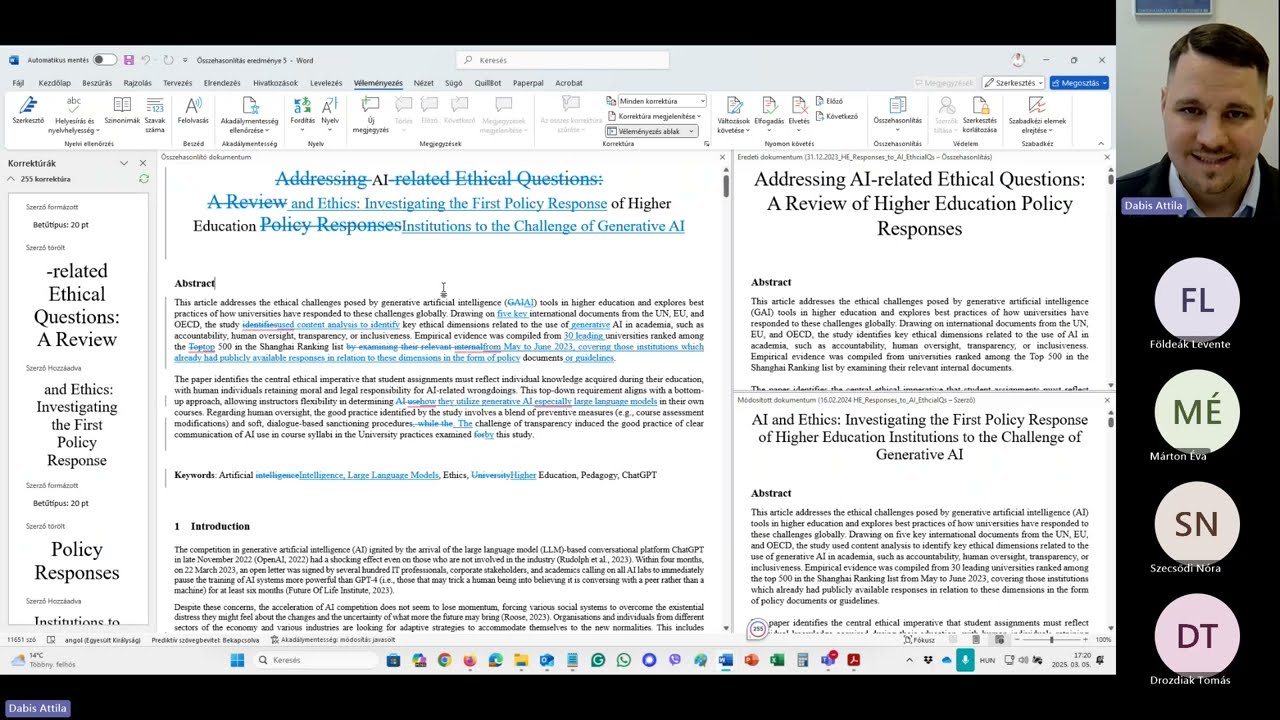

| It is advisable to use version-tracking software (e.g. Grammarly Authorship, Google Draftback) to monitor the development of longer, essay-type texts and to verify one’s own work. | These tools are not available on all platforms, and some of them are paid services. |

| AI can support the initial interpretation of foreign-language literature and the writing of texts. | During the interpretation of texts, parts of the context may be lost. Accurate source handling may be compromised, and AI may be prone to hallucinations. |

| Students may ask AI to evaluate and improve the formality and coherence of a text. | This may lead to an overly standardized style. |

| Best practice | Risks |
| --- | --- |
| Students may use AI to generate multiple possible research directions, but the final decision must be made on the basis of their own literature review. | The model may suggest non-existent articles or unrealistic research directions. |
| By identifying networks of relationships between articles and texts, as well as citation links, AI can help locate academic literature relevant to a research topic. | AI may also surface less relevant or lower-quality academic literature. |
| AI can assist in identifying research keywords, keyword combinations, and synonyms, which can lead to more precise and relevant literature searches. | Research focus may decrease. |
| AI can provide an overview of when it is appropriate to choose qualitative, quantitative, or mixed methodological approaches, and can assist in developing a more comprehensive research plan. | The model may sometimes provide overly general considerations, creating an appearance of professionalism without substantive methodological expertise. For concrete, current, and relevant research, professional consultation may be necessary. |
| AI can suggest topic categories, keywords, and database search strategies. | The model may generate false sources. |
| Students may ask the AI model to help identify possible operationalizations or to explore the practical applicability of research findings. | AI may provide suggestions that are unrealistic or not actually measurable. |
| AI can suggest code snippets (e.g. in R or Python), explanations, or debugging steps. | AI may recommend incorrect code or suboptimal statistical procedures. |

| Best practice | Risks |
| --- | --- |
| AI can assist with task breakdown, scheduling, or the creation of to-do lists. | Students may rely excessively on AI for organizing group dynamics. |
| Some AI applications are capable of generating audio-based summaries or even podcast-style audio materials, which can serve as effective preparation for a presentation. | The audio summary or conversation may be less structured. |
| With the help of AI, visual diagrams or illustrations can be created to better represent information and support easier comprehension. | AI-generated diagrams may sometimes be of low quality or insufficiently customizable. |
| AI can help build the logical flow of a presentation and suggest ideas for visuals, but the content of the slides must be validated by the students. | Overly simple, unprofessional visualizations and rhetorically appealing but substantively empty texts may be produced. |

AI Guides and International Guidelines

To better understand the background of the best practices, the university guide and the following international guidelines and regulations may provide useful support:

- University AI guide: https://www.uni-corvinus.hu/main-page/research/university-library/artificial-intelligence/ai-guides-tutorials/?lang=en

- Regulation (EU) 2024/1689 of the European Parliament and of the Council on artificial intelligence (EU AI Act): https://net.jogtar.hu/jogszabaly?docid=a2401689.eup#

- The relevant Hungarian regulation and national implementation of the EU AI Act: Act LXXV of 2025 (AI Act): https://njt.hu/jogszabaly/2025-75-00-00

- OECD Recommendation on Artificial Intelligence: https://legalinstruments.oecd.org/en/instruments/oecd-legal-0449

- OECD guidance on the effective and equitable use of artificial intelligence in education (OECD – Opportunities, Guidelines and Guardrails for Effective and Equitable Use of AI in Education): https://www.oecd.org/en/publications/oecd-digital-education-outlook-2023_c74f03de-en/full-report/opportunities-guidelines-and-guardrails-for-effective-and-equitable-use-of-ai-in-education_2f0862dc.html

- UNESCO guidance on the use of generative artificial intelligence in education and research (Guidance for Generative AI in Education and Research): https://www.unesco.org/en/articles/guidance-generative-ai-education-and-research

- UNESCO quick start guide on ChatGPT and artificial intelligence in higher education (ChatGPT and Artificial Intelligence in Higher Education – Quick Start Guide): https://unesdoc.unesco.org/ark:/48223/pf0000385146

- UNESCO reflections on generative artificial intelligence and the future of education (Generative AI and the Future of Education): https://unesdoc.unesco.org/ark:/48223/pf0000385877

- UNESCO Recommendation on the Ethics of Artificial Intelligence: https://www.unesco.org/en/articles/recommendation-ethics-artificial-intelligence

- European Commission guidelines for educators on the ethical use of artificial intelligence (Ethical Guidelines on the Use of AI and Data in Teaching and Learning for Educators): https://education.ec.europa.eu/news/ethical-guidelines-on-the-use-of-artificial-intelligence-and-data-in-teaching-and-learning-for-educators

TOOLS

List of recommended AI tools

The list of recommended AI tools has been professionally reviewed by the University’s AI Integrational Centre and approved by the AI Dean. The list is not exhaustive and is updated regularly (https://www.uni-corvinus.hu/downloads/cdj1.qwux7n/eng-list-of-ai-tools-recommended-by-cub-2026.pdf).

Institutional licenses are currently primarily held by faculty and staff. For students, institutional licenses are available for Grammarly EDU and, due to the Microsoft ecosystem, for Copilot’s closed system (which can be accessed with a Cusman ID).

The use of the tools is recommended only with public data. The entry of confidential, protected, or non-public data is prohibited. Where the given service allows it, users are obliged to disable the use of their data for model training purposes (opt out). The use of AI tools must always be in accordance with the University’s anti-plagiarism policy, the Vice-Rector for Education’s Regulation No. 6/2025, and Executive Committee Regulation No. 12/2025. The specific conditions for using AI tools in individual courses are determined by the instructor.

The AI tools recommended by the University can be classified into the following main functional categories:

- General conversational AIs and LLMs (Large Language Models)

- Translation and language tools

- Academic writing support and paraphrasing tools

- Research assistants and literature search tools

- Document and data extraction AIs

- Transcription, note-taking, and audio-based tools

- Visualisation and content creation tools

- AI detectors (with limited reliability)

Examples of tools and their use cases:

| Device type | Example | Short description / Use case | Institutional license |

| General conversational AI | ChatGPT Team | Analysis, drafting, educational and research support in a team environment | Yes |

| LLM-based search engine | Perplexity | Question and answer based on scientifically focused sources | Not |

| Closed-system LLM | Microsoft Copilot | Document analysis and content creation in the Microsoft ecosystem, in a secure institutional environment. | Yes |

| Translation | DeepL | Foreign language translation. | Yes |

| Linguistic and stylistic corrector | Grammarly EDU | Grammar, stylistic and clarity improvement for students and teachers | Yes (also for students) |

| Paraphrasing | QuillBot | Paraphrasing text in an academic style while preserving meaning | Yes |

| Academic writing assistant | Writefull | Scientific language feedback with Word integration | Not |

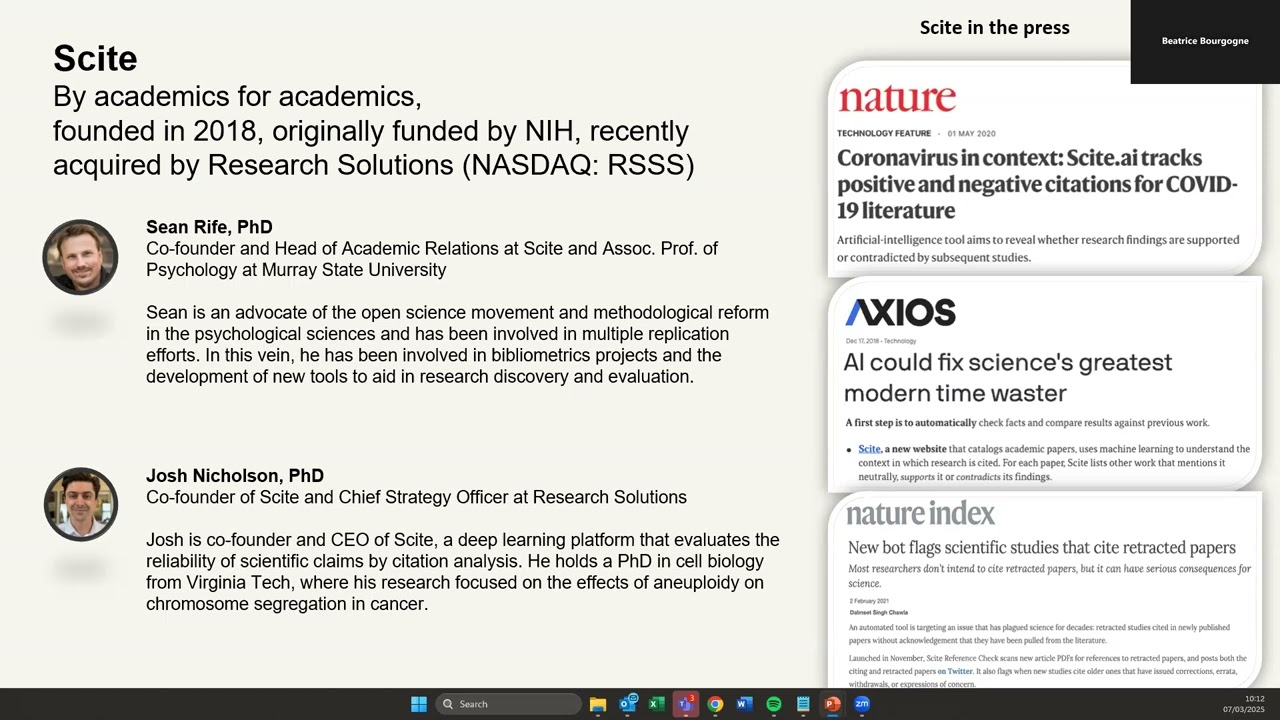

| Literature search assistant | Scite.AI | Analysing the context of quotes (supporting/contradictory references) | Yes |

| Literature visualiser | Connected Papers | Visual mapping of related publications | Not |

| Research Assistant | Elicit | Literature review and synthesis support | Not |

| Document analysis | ChatPDF | Analysing PDF content | Not |

| Note-taking and transcription | Alrite | Automatic transcription of lectures and discussions | Yes |

| Visualization | Napkin | Infographics and visual aids | Not |

| AI detector | GPTZero | AI-generated texts (for informational purposes only) | Not |

Online presentations about AI tools

The links to the online presentations are available here.

Ethical challenges of generative AI tools

The ethical use of artificial intelligence requires careful checking, verification, and critical refinement of AI-generated outputs from multiple perspectives. AI responses should never be accepted uncritically. They must always be interpreted and assessed by the user. This includes an awareness of the data on which a given tool was trained and a critical evaluation of whether its outputs may reflect bias, distortion, or incomplete information.

In the case of generative AI, particular attention must be paid to authorship and academic integrity. AI-generated content must be reformulated in the user’s own words before publication. Presenting AI output as one’s own work is unethical. Responsibility for any published result always rests with the human author.

AI should therefore be used as a supportive partner rather than a substitute for human judgment and expertise. Moreover, reliance on a single AI system should be avoided. Using multiple tools in parallel can help users benefit from their differing strengths while mitigating individual limitations.

AI tools can and should be used for inquiry and exploration, but always in a conscious, reflective manner. Their outputs must be handled with caution, responsibility, and critical awareness.

Basic Generative AI training (in English, certificate is awarded by the Digital Education Council)

All members of the Corvinus University community have free access to the “AI Literacy for All” course developed by the Digital Education Council: https://connect.digitaleducationcouncil.com/login/microsoft/b97d20f8-fa4c-4149-b149-3e40e2f1feef . It presents the basics required for the ethical, effective, and sustainable use of artificial intelligence tools through six thematic modules. You can access the course with your Cusman ID and password (SSO solution). It is currently available in English, but a Hungarian version is in development.

Please note: Completion of this course is mandatory in all Master’s programs as part of the thesis, and in undergraduate programs during the fall semester of the first year!

The course consists of several modules. Completing all of them takes approximately 4-5 hours, and you must pass a test at the end of each module to proceed. At the end, you receive a certificate, which you may use as proof of completion to fulfil relevant Corvinus requirements.

Moodle training

An in-house AI training course is also available on the university’s Moodle platform; it uses videos and readings to support the ethical, effective, and sustainable use of generative AI:

“LINK?”

Free training materials

- “AI Fluency” video training on Anthropic’s YouTube channel (which outlines the basics of generative AI in 12 episodes and approximately 70 minutes).

- The short training course “Elements of AI”, compiled by the University of Helsinki specifically for students, provides an enjoyable introduction to AI, focusing primarily on the basics of generative solutions (in English; takes approx. 5-6 hours altogether).

- Microsoft’s “Learn” service is available free of charge to all Corvinus students and staff (with Cusman login) – here you can choose from a wide range of AI courses, mainly focused on technology, in English (it is worth narrowing down the options by searching for “Artificial Intelligence”).

AI at Corvinus

The University organises AI-related scientific events and conferences, appears in the media on AI topics, and, alongside its science communication and training activities, is also an active player in innovations that support the practical use of AI. In addition, the university’s researchers regularly publish their AI-related research on a wide variety of topics, for example

AI tool request form

The purpose of the needs assessment is to allow instructors and Institutes to request the AI tools they consider necessary for carrying out their teaching and research work more efficiently; the requests are then assessed in order to examine subscription options and costs.


FREQUENTLY ASKED QUESTIONS

Artificial intelligence is a set of technologies capable of performing tasks that mimic human thinking – for example, writing text, creating images, analysing data, or suggesting decisions. In reality, it generates responses on a probabilistic basis by recognising patterns.

Three main types of AI are worth distinguishing:

Data-driven AI system: A business analytics solution built on computer models that uses AI technologies to process large volumes of data, recognise patterns, make predictions, optimise, and support decision-making. An example is a timetable-optimising algorithm that compiles the most efficient schedule based on available data.

Generative AI system (GenAI): A machine-based system, tool, or service designed to produce output content of a quality comparable to human problem-solving, taking into account the user’s questions, requests, and other input data (text, image, video). This includes large language models (LLMs) and standalone systems built on them (ChatGPT, Copilot, Gemini, or Claude), AI-based image generators (e.g. DALL·E, Midjourney), and solutions embedded in other software (e.g. GitHub Copilot).

Agent-based AI system: Agent-based artificial intelligence refers to AI systems capable of autonomously setting goals, making plans, and executing them. These digital agents do not merely follow programmed instructions but dynamically adapt to changing environments. An example is an AI agent that independently breaks down tasks – gathers information, checks the calendar, and prepares an email.

- Machine Learning (ML): A subfield of AI where algorithms ‘learn’ from data without being explicitly programmed.

- Deep Learning (DL): A subfield of machine learning that uses multi-layered neural networks to recognise complex patterns.

- LLM (Large Language Model): An AI system trained on vast amounts of text, capable of generating natural-language text (e.g. ChatGPT, Claude, Gemini).

- GPT (Generative Pre-Trained Transformer): A specific type of LLM on which ChatGPT and other tools are built.

- Prompt: The instruction or question given to the AI by the user.

- Token: A linguistic unit processed by the LLM (approximately the size of one syllable). Service providers often price usage based on tokens.

- Hallucination: When the AI confidently states something that is actually incorrect or non-existent (e.g. citing fabricated sources or generating false data).

Corvinus University supports the responsible use of artificial intelligence in accordance with the principles set out in Section 5 of Executive Board Resolution 12/2025:

- Openness – The University supports the conscious, responsible, ethical, sustainable, and transparent use of AI tools.

- Initiative – The University prepares its students for the changing technological environment.

- Professional and scientific integrity – Protecting the integrity of intellectual work is a shared responsibility.

- Human oversight and responsibility – Responsibility must always be assigned to natural or legal persons.

- Autonomy – Members of the community may freely decide on the use of AI tools within the framework of the rules.

- Transparency – The use of AI must be clearly indicated.

- Equal opportunity – AI tools must be accessible to everyone under the same conditions.

- Data protection – The use of AI must comply with data protection regulations.

- Responsibility – AI tools must not be used in a misleading or manipulative manner.

- Dialogue – Questions and concerns may be raised at any time with the course leader or programme directors, and if necessary with the AI Dean.

- Sustainability – AI tools must be operated in an environmentally, economically, and socially sustainable manner.

According to Section 3(2) of Executive Board Resolution 12/2025, the personal scope of the university regulation extends to the University’s employees, contractors, scholarship holders, and students. This FAQ was primarily prepared for students, but the principles apply to everyone.

Yes, but only with the permission of the course leader and in compliance with the applicable rules. For each course, the course leader determines how and to what extent AI use is permitted, while adhering to university guidelines. You can find the rules in the course syllabus or in the instructor’s briefing during the first week of the course. The general university rules apply even if the course syllabus does not contain any restrictions! If there is no explicit prohibition, generative AI may be used, but you must declare its use and comply with the relevant rules. If in doubt, ask the instructor in writing!

It is prohibited if:

- the instructor or the task description explicitly prohibits the use of AI;

- you submit AI-generated text as if you had written it yourself;

- you fail to indicate that you used AI for any purpose or task during your work;

- you use AI during exams or closed assessments without permission.

These constitute violations of the plagiarism policy and may result in disciplinary consequences. During closed assessments (e.g. written exams, in-class tests, Moodle tests), the use of AI is fundamentally prohibited unless the instructor explicitly permits it.

According to Section 17(1) of the Vice-Rector for Education’s Resolution 6/2025: in the absence of an explicit prohibition declared by the course instructor, generative AI may be used for completing tasks requiring independent student work, within the framework of the regulations. In case of doubt, pursuant to Section 17(2), the student shall request written clarification from the course leader or instructor.

Important: Unauthorised AI use entails the legal consequences defined in the Study and Examination Regulations and the Plagiarism Policy.

In all cases, clearly and accurately indicate in the submitted work:

- which AI tool you used (e.g. ChatGPT 5, Claude 4.5);

- what you used it for (e.g. brainstorming, drafting an outline, literature research, programming, text editing, translation);

- to what extent it contributed to producing the result.

The Corvinus University AI regulation also provides a declaration template, which may be required by the instructor or other regulations to accompany the submitted material. For example, it is mandatory for theses.

- Submitting AI-generated text, images, video, or program code as your own work.

- Incorporating AI-generated material without citing the source.

- Misleading the instructor, reviewer, or fellow students about the origin of the work.

- Sharing the work, teaching materials, or personal data of other students or instructors with AI.

- Using AI for plagiarism, cheating, or facilitating such activities.

- Using AI for any other unethical activity.

You can use process-recording tools (e.g. DraftBack in Google Docs, track changes in Word), version control for coding (e.g. Git), or simply save multiple versions of your work to document your progress. These can help prove that the work was genuinely your own creation. But remember: work done with AI can also be an independent achievement if you properly document what you did.
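The "save multiple versions" approach mentioned above can be sketched in a few lines of Python. This is only an illustrative sketch, not a university-provided tool: the function name `save_snapshot`, the `versions` folder, and the `log.txt` file are hypothetical names chosen for this example.

```python
import shutil
import time
from pathlib import Path


def save_snapshot(document, archive_dir, note=""):
    """Copy `document` into `archive_dir` under a timestamped name and log a note."""
    document = Path(document)
    archive_dir = Path(archive_dir)
    archive_dir.mkdir(parents=True, exist_ok=True)

    stamp = time.strftime("%Y%m%d-%H%M%S")
    target = archive_dir / f"{document.stem}_{stamp}{document.suffix}"
    counter = 1
    while target.exists():  # avoid overwriting a snapshot made in the same second
        target = archive_dir / f"{document.stem}_{stamp}_{counter}{document.suffix}"
        counter += 1

    shutil.copy2(document, target)  # copy2 also preserves the file's modification time

    # Append a short note, e.g. what changed and whether AI was used (hypothetical log format)
    with open(archive_dir / "log.txt", "a", encoding="utf-8") as log:
        log.write(f"{target.name}\t{note}\n")
    return target


if __name__ == "__main__":
    import tempfile

    # Demonstration in a temporary folder so that no real files are touched
    with tempfile.TemporaryDirectory() as tmp:
        essay = Path(tmp) / "essay.txt"
        essay.write_text("First draft.", encoding="utf-8")
        snapshot = save_snapshot(essay, Path(tmp) / "versions", "first draft, no AI used")
        print(snapshot.name)
```

Calling the function after each working session leaves a dated trail of drafts together with a note on what was done, which can support the documentation described above. A version control system such as Git serves the same purpose more robustly.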

Today’s generative AI is based on probability distributions generated from training data. The generative process consists of producing content that is highly likely to be coherent with the user input (prompt). It is important to know that current generative AI systems do not understand the words they generate. Image generators do not understand the physical structures they depict either.

The statistical foundation provides far-reaching coherence, but reliance on statistics sets limits on the reliability of generative AI. Seemingly plausible but incorrect output (so-called ‘hallucination’) occurs frequently. Therefore, always verify AI-generated information from reliable sources!
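The idea of generating text from probability distributions can be illustrated with a deliberately tiny toy example. The word table and its probabilities below are invented for illustration only; real generative systems learn far larger distributions from their training data and work on tokens rather than whole words.

```python
import random

# Hypothetical toy "model": for each word, a probability distribution over
# possible next words. All values are made up for this illustration.
NEXT_WORD_PROBS = {
    "the": {"student": 0.5, "exam": 0.3, "moon": 0.2},
    "student": {"writes": 0.6, "reads": 0.4},
    "exam": {"starts": 1.0},
    "moon": {"writes": 1.0},
    "writes": {"the": 1.0},
    "reads": {"the": 1.0},
    "starts": {},
}


def generate(prompt_word, max_words, rng):
    """Extend the prompt by repeatedly sampling a next word from the table."""
    words = [prompt_word]
    for _ in range(max_words):
        dist = NEXT_WORD_PROBS.get(words[-1], {})
        if not dist:
            break  # no known continuation for the last word
        candidates = list(dist.keys())
        weights = list(dist.values())
        # Pick one candidate at random, weighted by its probability
        words.append(rng.choices(candidates, weights=weights, k=1)[0])
    return words


rng = random.Random(0)  # fixed seed so repeated runs give the same sample
print(" ".join(generate("the", 6, rng)))
```

The sketch never checks whether a sentence is true: "the moon writes" is a perfectly valid path through the table. Scaled up, this is one way to picture why fluent output can still be wrong and needs to be verified.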

The person who uses AI-generated content is responsible for it. If you publish AI content (e.g. an assignment, presentation, social media post), you also assume responsibility for it.

An AI system has no legal personality, so no liability can be assigned to it. The compiler of the training data is also not liable for the output, as it is not foreseeable what result the AI will generate. Always check and validate AI output before using it! If you are unsure about something, it is better not to use it!

The output of an AI system does not create copyright for the system itself, since European copyright law is based on human creative activity. AI is not human, therefore it cannot be an author. The system’s manufacturer also does not acquire copyright over the output, as it does not participate with sufficient creativity in its creation.

The user may acquire copyright over the output if AI was merely used as a tool in their creative process, for example, if they provided a particularly creative prompt, or further developed the output (e.g. created a collage from it).

According to Sections 8 and 9 of Executive Board Resolution 12/2025, the fundamental risks associated with AI use include unauthorised access to input data, public disclosure, and threats to information security and personal data. Pursuant to Sections 19 (Protection of confidential information) and 20 (Protection of personal data), never enter:

- Personal data (your name, Neptun code, email address, phone number, photo)

- Other people’s personal data and copyrighted works

- Confidential research materials, unpublished papers

- The full text of assignments or theses

- Internal university or workplace documents

- Passwords, access credentials

- Financial or health information

What you enter into AI can potentially be read, stored, and used by service providers. Therefore, once something is entered into AI, it can no longer be considered confidential. Anonymisation alone is often not sufficient!

Yes. According to Section 26(1) of Executive Board Resolution 12/2025, the University publishes and updates on its website a list of verified and primarily recommended generative AI systems:

Recommended AI tools list (uni-corvinus.hu)

According to Section 27, the University may provide official, corporate, or group AI subscriptions for community members. Currently, Microsoft Copilot is available to students with a university email address. Pursuant to Section 28, the selection of AI tools is coordinated by the AI Dean, and their approval falls within the competence of the Executive Board.

If you use free tools, be aware that the service provider may use your data to develop the model further.

AI can help in many areas:

- Understanding course material: Simplifying complex concepts, preparing summaries and explanations

- Practice: Generating self-assessment questions, knowledge quizzes

- Time management: Creating study plans, scheduling tasks

- Language support: Processing foreign-language sources, translation

- Simulations: Practising interview situations, exams, presentations

- Accessibility: Assistance for non-native speakers or students with different abilities

- Developing writing skills: Feedback, structure suggestions

- Source finding: Support in locating academic materials on a given topic

The use of AI carries numerous risks:

- Inaccuracy and hallucination: AI frequently generates seemingly correct but false information

- Biases: AI may reproduce biases and distortions present in the data it was trained on

- Copyright risks: It is not always clear what data the AI was trained on, so the content it generates may closely resemble others’ copyrighted materials

- Data protection risks: The data entered may be stored and used by the service provider

- Over-reliance: Critical thinking and independent learning may weaken

- Environmental impact: AI models involve significant energy and water consumption

- Academic integrity: Improper use may lead to disciplinary proceedings

Risks should always be assessed in proportion to the nature of the task. The stakes of home practice are different from those of an exam or assignment.

Using AI for emotional or mental health support is not recommended. Generative AI cannot replace human relationships and professional support.

AI tools:

- Cannot provide emotional nuance and clinical awareness

- May give inaccurate and potentially dangerous advice

- In crisis situations, may amplify harmful thoughts through feedback loops

If you are struggling with difficulties, turn to the following services:

- Student services and learning support

- University psychological counselling

For effective AI use:

- Try several different tools – they may give different results for the same prompt

- Be aware that the same tool may give different answers to the same question

- AI outputs are generally not reproducible – expect a different response each time

- Don’t spend too much time trying different tools – manage your time

- AI models may be based on data that is months or years old

- Always verify information from reliable sources

- AI does not replace developing your own knowledge, skills, and critical perspective

- Always ask the AI to provide the exact sources it used so you can verify the accuracy of its claims

- Do not submit any claim or content that you could not defend or explain orally, because the instructor may ask follow-up questions!

Recommended training resources:

- Elements of AI (https://elementsofai.com) – free multilingual online course

- Coursera, edX, Microsoft Learn (https://learn.microsoft.com) – English-language AI courses

- Moodle course (maintained by the Centre for Educational Quality Development and Methodology)

- Library workshops and training (search the Library website: https://www.uni-corvinus.hu/main-page/research/university-library/?lang=en)

Course leader, instructor:

- How should the information about AI use in course syllabi be interpreted?

- Why can or cannot AI be used in specific courses and assignments?

- Administrative questions (e.g. course syllabus is unclear, there is no precise wording on AI use, AI declaration, etc.).

Library:

- How can accidental copyright violations be avoided?

- What does plagiarism mean?

- How can we use artificial intelligence ethically and responsibly?

- What tools are available and which AI can be used for different tasks?

- Technical tool management.

IT Department:

- What IT-related dangers does the use of artificial intelligence pose?

- Technical tool management.

Student services, learning support:

- How should the AI-related requirements and expectations be interpreted?

- How can we manage our fears and anxieties related to artificial intelligence?

- Methodological support for learning.

- Technical tool management.

Moodle course platform (maintained by the Centre for Teaching and Learning):

- What are the university-level ethical rules for AI use?

- Advice on where and how AI can be applied in the learning process.

- How can potential dangers be avoided?

AI Dean:

- Feedback on the Executive Board and Vice-Rector for Education Resolutions.

Resources: AI resources (uni-corvinus.hu)

The regulations and the recommended tool list may be updated from time to time – always check the latest version!

AI Contacts

Who to contact if you have further questions:

- Using AI in the context of a course: Course Leader

- AI use in the context of the thesis or diploma work: Programme Leader

- AI Tools: Colleagues at the Library

- Best practices (if you have a recommendation or a question): Artificial Intelligence Integration Centre (miik@uni-corvinus.hu)

- Regulations: Dean for Artificial Intelligence – Dean.AI@uni-corvinus.hu